NVIDIA GB200 NVL72 Cooling Requirements: What OEMs Need to Know

The NVIDIA GB200 Grace Blackwell Superchip represents a step-change in AI compute density and, with it, a step-change in thermal management requirements. For server OEMs, system integrators, and data center operators designing GB200 NVL72 deployments, understanding the cooling architecture is not optional. This guide covers GB200 thermal specifications, why liquid cooling is mandatory, and what to demand from your cold plate supplier.

1. GB200 Thermal Specifications

The NVIDIA GB200 Grace Blackwell Superchip integrates two Blackwell B200 GPUs and one Grace CPU on a single module, linked via NVLink-C2C. The approximate thermal figures for each component, the module, and the full rack are summarized below:

| Component | TDP | Die Area (mm²) | Peak Heat Flux (W/cm²) | Cooling Requirement |
|---|---|---|---|---|
| B200 GPU (×2 per module) | ~500 W each | ~814 | ~600 | Direct-to-chip liquid cold plate mandatory |
| Grace CPU | ~200 W | ~600 | ~330 | Integrated into GPU cold plate assembly |
| GB200 Module Total | ~1200 W | Combined | 600+ | Liquid cooling only |
| NVL72 Rack (72 GPUs, 36 modules) | 120 kW+ | n/a | n/a (rack-level) | Liquid-cooled rack with CDU mandatory |
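
As a sanity check on the table, die-average heat flux can be derived directly from TDP and die area. The sketch below uses only the table's approximate figures; the function name is illustrative. The averages come out roughly ten times lower than the peak column, so the peak values are best read as localized hot-spot estimates rather than die-wide figures.

```python
# Die-average heat flux from the table's approximate TDP and die-area figures.
# These inputs are the article's estimates, not vendor-confirmed specifications.

def average_heat_flux_w_per_cm2(tdp_w: float, die_area_mm2: float) -> float:
    """Return die-average heat flux in W/cm^2 (1 cm^2 = 100 mm^2)."""
    return tdp_w / (die_area_mm2 / 100.0)


components = {
    "B200 GPU": (500.0, 814.0),   # (TDP in W, die area in mm^2)
    "Grace CPU": (200.0, 600.0),
}

for name, (tdp_w, area_mm2) in components.items():
    flux = average_heat_flux_w_per_cm2(tdp_w, area_mm2)
    print(f"{name}: {flux:.1f} W/cm^2 die average")
# B200 GPU: 61.4 W/cm^2 die average
# Grace CPU: 33.3 W/cm^2 die average
```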

2. Why Air Cooling Cannot Work for GB200

The physics of air cooling impose hard limits that no fan or airflow optimization can overcome at GB200 power densities:

- Air cooling rack limit: Even with high-CFM fans and optimized airflow, air cooling is limited to 8–25kW per standard server rack. The GB200 NVL72 generates 120kW+ — 5× to 15× beyond this physical ceiling.

- Die heat flux: Air-cooled heatsinks top out at 5–15 W/cm² effective heat flux. The GB200 GPU die operates at 500–600 W/cm² peak, roughly 33× to 120× beyond air cooling capability.

- Thermal resistance: Air-cooled heatsinks achieve Rth of 0.1–0.3 °C/W. At 1200W, this produces 120–360°C above ambient — far exceeding the GB200’s maximum junction temperature.

- Fan noise and power: Even if air cooling were thermally possible, the fan power required to move sufficient airflow through a 120kW rack would consume 20–30% of the rack’s compute power and generate 90+ dB of noise.
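
The arithmetic behind these limits is easy to reproduce. The following is a minimal sketch using only the ranges quoted in the list above; the constant and function names are illustrative, and the steady-state relation ΔT = P × Rth is the standard lumped thermal-resistance model.

```python
# Reproduces the air-cooling shortfall arithmetic from the quoted ranges.

RACK_POWER_KW = (120.0, 120.0)      # NVL72 rack power (low, high)
AIR_RACK_LIMIT_KW = (8.0, 25.0)     # air-cooled rack capacity range
DIE_FLUX_W_CM2 = (500.0, 600.0)     # quoted GPU die heat flux range
AIR_FLUX_LIMIT_W_CM2 = (5.0, 15.0)  # effective air heatsink flux range
AIR_RTH_C_PER_W = (0.1, 0.3)        # air heatsink thermal resistance range
MODULE_POWER_W = 1200.0


def ratio_range(demand, limit):
    """Return (min, max) of demand/limit, where both are (low, high) tuples."""
    return demand[0] / limit[1], demand[1] / limit[0]


def temperature_rise_c(power_w, rth_c_per_w):
    """Steady-state temperature rise above ambient: dT = P * Rth."""
    return power_w * rth_c_per_w


rack_lo, rack_hi = ratio_range(RACK_POWER_KW, AIR_RACK_LIMIT_KW)
flux_lo, flux_hi = ratio_range(DIE_FLUX_W_CM2, AIR_FLUX_LIMIT_W_CM2)
rise_lo = temperature_rise_c(MODULE_POWER_W, AIR_RTH_C_PER_W[0])
rise_hi = temperature_rise_c(MODULE_POWER_W, AIR_RTH_C_PER_W[1])

print(f"Rack power vs. air limit: {rack_lo:.1f}x to {rack_hi:.1f}x")
print(f"Die flux vs. air limit:   {flux_lo:.0f}x to {flux_hi:.0f}x")
print(f"Rise above ambient:       {rise_lo:.0f} to {rise_hi:.0f} C")
```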

NVIDIA has officially confirmed that GB200 NVL72 requires liquid cooling. This is not a design preference — it is a mandatory architectural requirement.

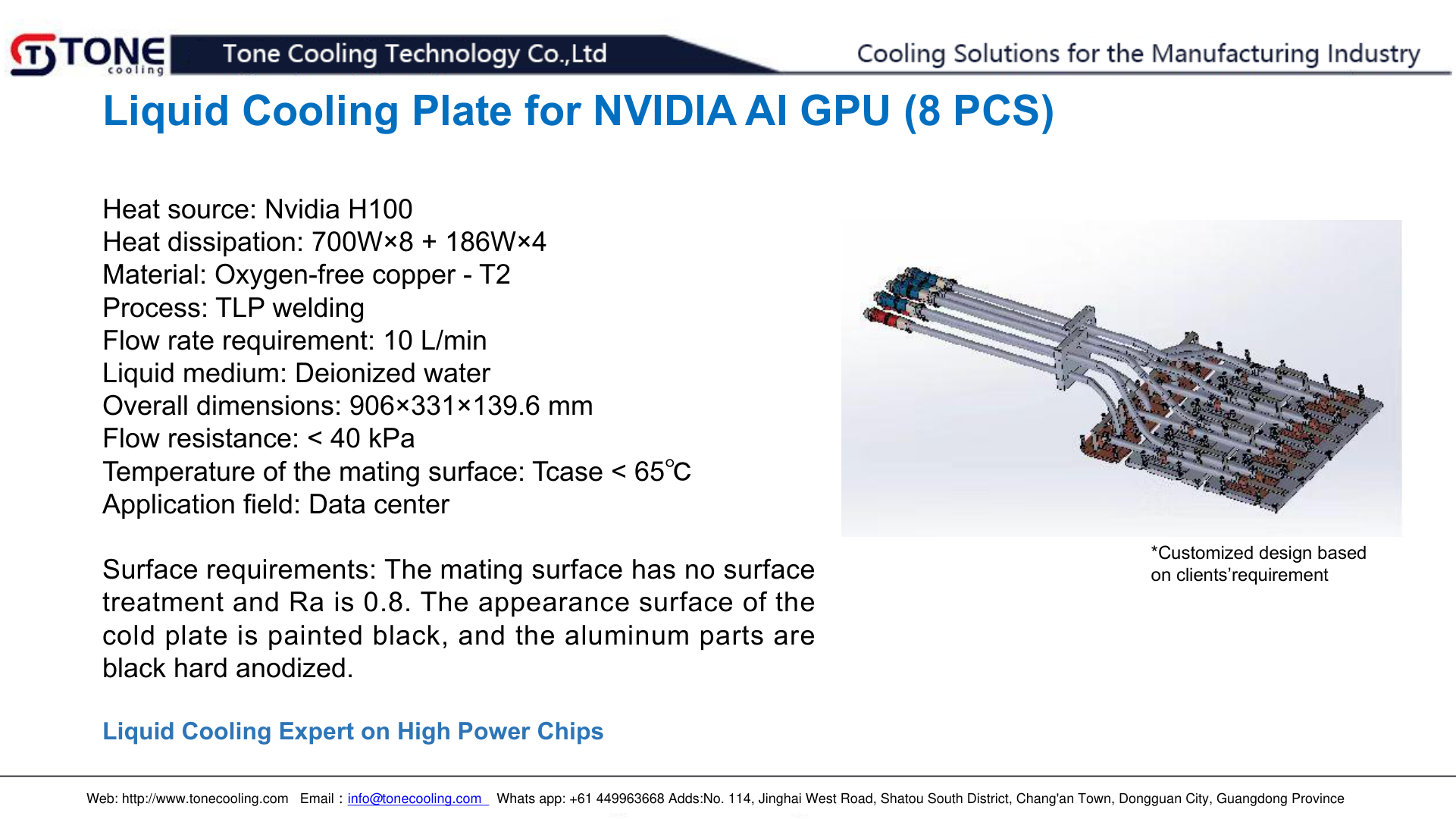

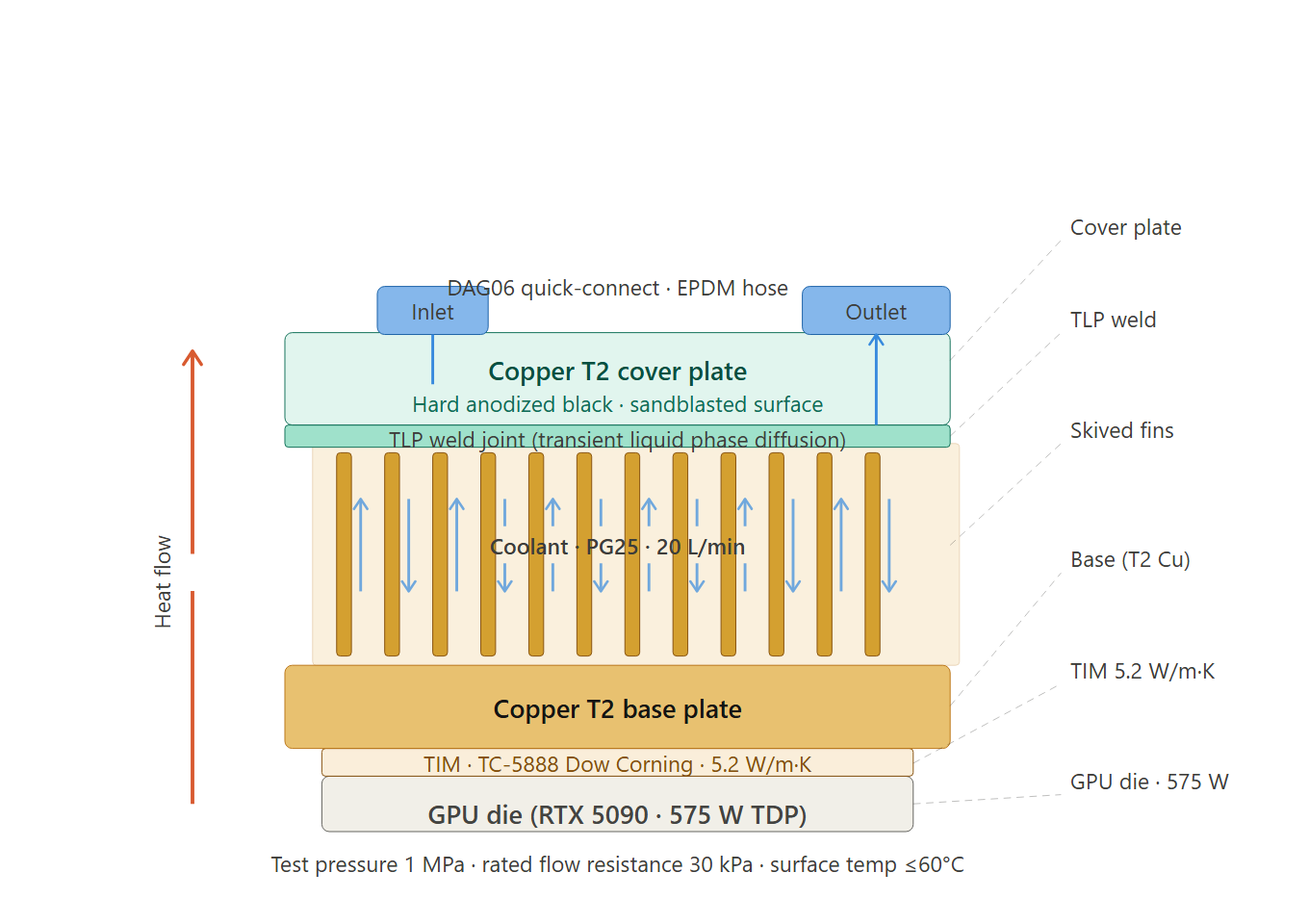

3. Cold Plate Design Requirements for GB200

Designing a cold plate for the GB200 demands precision across thermal, mechanical, and materials domains:

Thermal Design Requirements

- Must dissipate up to 1200W per GB200 module with coolant inlet at 30–45°C

- Target thermal resistance: ≤0.03 °C/W from cold plate base to coolant bulk

- Micro-channel or turbulator internal fin design to maximize heat transfer coefficient

- CFD simulation required to validate flow distribution uniformity across the die footprint

- Minimum coolant flow rate: 2–3 L/min per module
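
These targets can be combined into a rough temperature budget for the cold plate base. The sketch below assumes plain-water properties (density 1.0 kg/L, specific heat 4180 J/kg·K) and takes the bulk coolant temperature as the mean of inlet and outlet; both are simplifying assumptions, and a glycol mix would show a somewhat larger coolant rise. Function names are illustrative.

```python
# Rough cold plate temperature budget from the thermal design targets.
# ASSUMPTIONS: plain-water properties; bulk coolant = mean of inlet and outlet.

WATER_DENSITY_KG_PER_L = 1.0   # assumed
WATER_CP_J_PER_KG_K = 4180.0   # assumed


def coolant_temp_rise_c(power_w, flow_l_per_min,
                        density_kg_per_l=WATER_DENSITY_KG_PER_L,
                        cp_j_per_kg_k=WATER_CP_J_PER_KG_K):
    """Coolant inlet-to-outlet rise from an energy balance: dT = P / (m_dot * cp)."""
    mass_flow_kg_s = flow_l_per_min / 60.0 * density_kg_per_l
    return power_w / (mass_flow_kg_s * cp_j_per_kg_k)


def base_temp_c(inlet_c, power_w, rth_c_per_w, coolant_rise_c):
    """Cold plate base temperature: bulk coolant temperature plus P * Rth."""
    bulk_c = inlet_c + coolant_rise_c / 2.0
    return bulk_c + power_w * rth_c_per_w


POWER_W = 1200.0
RTH_C_PER_W = 0.03

for inlet_c in (30.0, 45.0):
    for flow in (2.0, 3.0):
        rise = coolant_temp_rise_c(POWER_W, flow)
        base = base_temp_c(inlet_c, POWER_W, RTH_C_PER_W, rise)
        print(f"inlet {inlet_c:.0f} C, {flow:.0f} L/min: "
              f"coolant rise {rise:.1f} C, base {base:.1f} C")
```

Under these assumptions the 0.03 °C/W target alone contributes 36 °C at 1200 W, while the coolant rise is about 8.6 °C at 2 L/min, so the thermal resistance term dominates the budget.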

Mechanical & Interface Requirements

- Contact surface flatness: ≤0.05 mm over the die contact area

- Surface roughness: Ra ≤ 0.8 μm to ensure minimal thermal interface material (TIM) bond-line variation

- Must accommodate NVIDIA’s specified mounting hole pattern and torque specifications (±10% clamping force)

- Manifold port configuration must align with NVL72 rack plumbing architecture

- Operating pressure: up to 6 bar; burst pressure: 12 bar minimum

Material Requirements

- Copper (C1100 or C1020): Required for maximum thermal conductivity at the die interface. Vacuum-brazed construction.

- All wetted surfaces must be compatible with 50/50 ethylene glycol/water (EGW) coolant per ASHRAE W55 water quality standards

- RoHS compliance required for all materials

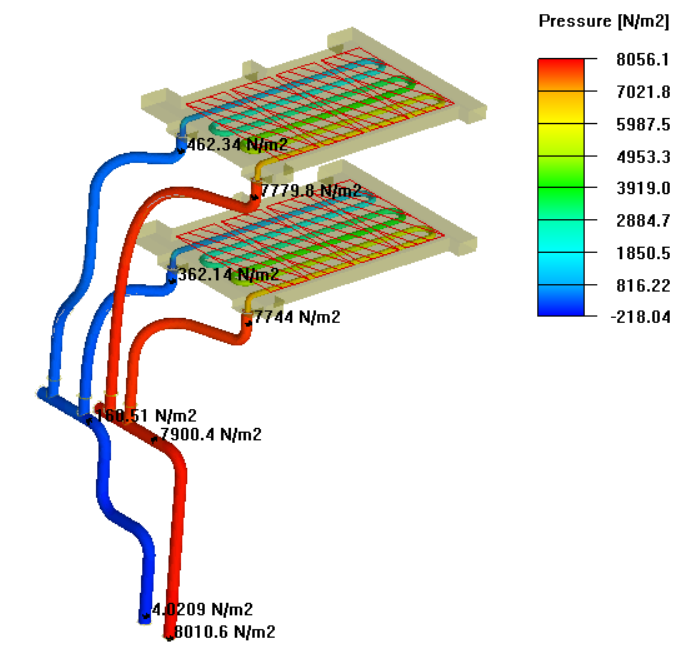

4. NVL72 Rack-Level Cooling Architecture

The GB200 NVL72 rack is not just a collection of servers — it is a tightly integrated compute fabric with a unified cooling architecture:

- 18 compute trays, 36 GB200 modules: Each tray carries two GB200 modules (each module: 2× B200 GPU + 1× Grace CPU), for 72 GPUs per rack. Each module has a dedicated cold plate assembly.

- Rack manifold system: All 36 cold plates connect to a common supply and return manifold within the rack. Manifold design must ensure balanced flow distribution (±5% flow rate per branch).

- Coolant Distribution Unit (CDU): Rack-external CDU supplies coolant at controlled temperature and flow. Typical CDU capacity: 150–200kW for NVL72 deployment.

- Secondary cooling loop: CDU connects to building chilled water or evaporative cooling tower. Water-cooled CDUs offer best PUE (Power Usage Effectiveness).

- NVLink switch modules: Additional cooling required for NVLink switch ASICs. Air cooling is typically used for switch modules; some OEM designs integrate water cooling.
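
The ±5% balance requirement on the rack manifold can be checked against measured or simulated branch flows. The sketch below treats the tolerance as a deviation from the mean branch flow, which is an assumption about how the requirement is defined. The flow values are hypothetical test data, and the function name is illustrative.

```python
# Flags manifold branches whose flow deviates from the mean by more than a tolerance.
# ASSUMPTION: the +/-5% requirement is measured relative to the mean branch flow.
from statistics import mean


def out_of_balance_branches(flows_l_per_min, tolerance=0.05):
    """Return (branch_index, fractional_deviation) for branches outside tolerance."""
    avg = mean(flows_l_per_min)
    return [
        (i, (flow - avg) / avg)
        for i, flow in enumerate(flows_l_per_min)
        if abs(flow - avg) / avg > tolerance
    ]


# Hypothetical data: 36 branches nominally at 2.5 L/min, with the last one starved.
flows = [2.5] * 35 + [2.2]

print(f"Total rack flow: {sum(flows):.1f} L/min")
for index, deviation in out_of_balance_branches(flows):
    print(f"Branch {index}: {deviation:+.1%} from mean")
```

With this data only the starved branch is flagged, at roughly 12% below the mean. The same check could be run on CFD output before committing to manifold tooling.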

5. What OEMs Need from Cold Plate Suppliers

OEMs designing GB200 NVL72 servers need more than a component — they need a thermal engineering partner. Here’s what to require from your cold plate manufacturer:

| Requirement | Why It Matters | ToneCooling Capability |
|---|---|---|
| CFD thermal simulation | Validate thermal performance before cutting tooling | Yes: full CFD flow and thermal analysis |
| 7–15 day prototype lead time | Program milestones require fast iteration cycles | Yes: MOQ 5 pcs, 7–15 business days |
| Vacuum-brazed copper construction | Maximum thermal conductivity and void-free bond | Yes: C1100/C1020 vacuum brazing |
| Pressure test to 12 bar burst | Coolant leak in a live rack is catastrophic | Yes: 100% hydrostatic and helium leak test |
| PPAP Level 3 documentation | Required for OEM supplier qualification programs | Yes: full PPAP package available |
| Manifold integration support | Rack manifold must balance flow to all 36 cold plates | Yes: custom manifold design and co-engineering |

ToneCooling’s GB200 liquid cold plate is engineered specifically for the Grace Blackwell Superchip architecture. Learn more on our NVIDIA GB200 Liquid Cold Plate product page, or explore our complete AI Server Liquid Cooling solutions.

NVIDIA GB200 NVL72 Cooling Requirements: Thermal Specifications

Understanding NVIDIA GB200 NVL72 cooling requirements is essential for OEMs designing next-generation AI server racks. Each GB200 Grace Blackwell Superchip dissipates up to 1200 W, requiring direct-to-chip liquid cooling with a cold plate thermal resistance of ≤0.03 °C/W from base to bulk coolant.

The NVIDIA GB200 NVL72 cooling requirements specify a rack-level thermal budget of approximately 120 kW across 72 GPUs. This demands carefully designed manifold systems with balanced flow distribution, typically 2.0–2.5 L/min per GPU cold plate with total system pressure drop under 100 kPa.
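
The flow and pressure-drop figures above imply a modest pumping load relative to the rack's heat load. The sketch below uses the ideal hydraulic power relation P = ΔP × Q. It assumes 72 cold plates (one per GPU, following the per-GPU flow figure) and a hypothetical 50% pump efficiency; neither value comes from a published specification.

```python
# Ideal hydraulic pumping power for the rack loop: P = dP * Q.
# ASSUMPTIONS: 72 cold plates at the upper flow figure; 50% pump efficiency.

def hydraulic_power_w(total_flow_l_per_min, pressure_drop_kpa):
    """Return ideal hydraulic power in watts for a given flow and pressure drop."""
    flow_m3_per_s = total_flow_l_per_min / 1000.0 / 60.0
    return pressure_drop_kpa * 1000.0 * flow_m3_per_s


COLD_PLATES = 72             # assumed: one cold plate per GPU
FLOW_PER_PLATE_L_MIN = 2.5   # upper end of the quoted range
PRESSURE_DROP_KPA = 100.0    # quoted upper bound on system pressure drop
PUMP_EFFICIENCY = 0.5        # hypothetical
RACK_HEAT_LOAD_W = 120_000.0

total_flow = COLD_PLATES * FLOW_PER_PLATE_L_MIN
hydraulic_w = hydraulic_power_w(total_flow, PRESSURE_DROP_KPA)
electrical_w = hydraulic_w / PUMP_EFFICIENCY

print(f"Total flow: {total_flow:.0f} L/min")
print(f"Hydraulic power: {hydraulic_w:.0f} W")
print(f"Pump input at {PUMP_EFFICIENCY:.0%} efficiency: {electrical_w:.0f} W "
      f"({electrical_w / RACK_HEAT_LOAD_W:.2%} of rack heat load)")
```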

ToneCooling manufactures GB200-compatible cold plates with validated thermal performance. Request a quote for your NVL72 deployment.

Building a GB200 NVL72 Server? Talk to Our Engineers.

ToneCooling manufactures direct-to-chip liquid cold plates for NVIDIA GB200, GB300, H200, and AMD SP5 platforms. CFD simulation, prototype in 7–15 days. Vacuum-brazed copper. ISO 9001.