Introduction: The “Heat” Crisis in Data Centers and Why Liquid Cooling Is No Longer Optional

The arrival of large-scale AI training, inference clusters, and ever-more powerful GPUs has fundamentally changed the thermal landscape of modern data centers. High-end accelerators such as NVIDIA H100-class devices have thermal design powers (TDPs) of up to roughly 700 W, and newer parts approach 1000 W per device. When racks are densely populated with such devices, the combined heat load per rack can exceed what traditional air-cooled infrastructure was designed to handle.

The consequence is that air-cooled systems increasingly hit their thermal ceiling: higher ambient temperatures cause throttling, performance loss, and reduced hardware lifespan. Worse, significant energy is spent not on computation but on moving air and running chillers. In short, compute capability becomes constrained by cooling capability.

Because liquids have far higher specific heat and thermal conductivity than air, liquid cooling is rapidly moving from niche to mainstream. For organizations scaling AI workloads, it is a strategic lever to increase density, reduce PUE (Power Usage Effectiveness), and realize meaningful operational cost savings.

Liquid Cooling Overview: A New Paradigm for Data Center Thermal Management

From Air to Liquid: The Physics That Justify the Shift

Air’s volumetric heat capacity is small; moving large amounts of heat via air requires massive airflow and large fans, which in turn consume power and create noise. Water and many engineered dielectric fluids, by contrast, carry more heat per unit volume and transfer heat much more effectively across surfaces. A comparison highlights this straightforward advantage:

- Specific heat and density: Water stores roughly 4x as much heat per unit mass as air and, because it is about 800 times denser, on the order of 3,500x as much per unit volume.

- Thermal conductivity: Liquids conduct heat away from hot spots more efficiently, reducing thermal gradients and hotspots.
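The comparison above can be made concrete with the sensible-heat relation Q = ρ · V̇ · c_p · ΔT. The following is a minimal sketch, not a design calculation: the fluid properties are approximate room-temperature textbook values, and the 50 kW rack load and 10 K coolant temperature rise are assumptions chosen only for illustration.

```python
# Approximate fluid properties near room temperature (textbook values).
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 997.0, "cp": 4180.0}  # kg/m^3, J/(kg*K)


def volumetric_flow_m3_per_s(heat_w, delta_t_k, fluid):
    """Flow needed to carry heat_w with a coolant temperature rise of delta_t_k."""
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


RACK_HEAT_W = 50_000.0  # assumed rack heat load, not from the article
DELTA_T_K = 10.0        # assumed coolant temperature rise

air_flow = volumetric_flow_m3_per_s(RACK_HEAT_W, DELTA_T_K, AIR)
water_flow = volumetric_flow_m3_per_s(RACK_HEAT_W, DELTA_T_K, WATER)

print(f"Air:   {air_flow:.2f} m^3/s")
print(f"Water: {water_flow * 1000 * 60:.0f} L/min")
print(f"Air needs about {air_flow / water_flow:.0f}x the volumetric flow")
```

Under these assumptions, air has to move a little over 4 m³/s while water needs only about 72 L/min, which is why fan power and duct size dominate air-cooled designs at high rack densities.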

Core business values unlocked by liquid cooling include higher rack-level compute density, lower total energy consumption (lower PUE), and the potential for waste-heat recovery.

Two Dominant Routes: Liquid Cold Plates vs Immersion Cooling

In practical deployments, liquid cooling typically follows one of two patterns:

- Liquid cold plate (direct-to-chip) cooling: Cold plates make direct thermal contact with CPUs, GPUs, and other hot components. Coolant flows through custom channels inside the cold plate and then to the CDU (Cooling Distribution Unit) and heat rejection system.

- Immersion cooling: Entire servers, or server assemblies, are submerged in dielectric fluids (single-phase or two-phase), allowing direct fluid contact with all components for highly uniform cooling.

Deep Comparison: Liquid Cold Plate vs Immersion Cooling

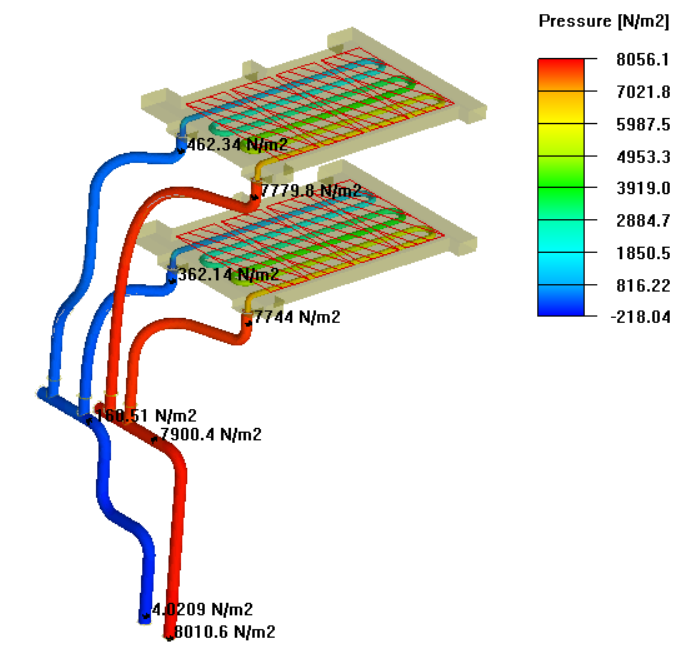

The following table summarizes the practical trade-offs to help operators choose the best path according to cost, complexity, and objectives.

| Comparison Dimension | Liquid Cold Plate | Immersion Cooling |
|---|---|---|
| Operating principle | Direct contact via cold plate channels attached to CPUs/GPUs; coolant carries heat to CDU/radiator. | Servers immersed in dielectric fluid; components directly exchange heat to the bath (single- or two-phase). |
| Retrofit difficulty | Medium: server modifications needed but can often be phased into existing infrastructure. | High: requires custom enclosures, tanking, and significant room changes (floor loading, leak containment). |
| Cooling efficiency | High: targeted cooling, excellent for high-power chips. | Very high: whole-system uniform cooling with minimal hotspots. |
| Cost structure | Moderate initial investment; scalable deployment; CDU and piping costs apply. | High initial investment in tanks, pumps, and layout; long-term PUE can approach ~1.02 in ideal scenarios. |
| Maintenance | Familiar maintenance workflows with some new steps (quick disconnects, leak monitoring). | New workflows (server handling from the bath); however, no dust and lower corrosion risks in dielectric fluids. |
| Best use cases | AI servers, HPC clusters, phased upgrades to existing data centers. | New high-density deployments, hyperscale HPC centers, crypto mining farms seeking extreme density. |

Core Technologies: Building Blocks of a Data Center Liquid Cooling System

Cooling Fluids — The System’s “Blood”

Choosing coolant is foundational. Options include deionized water, water-glycol mixtures, engineered fluorinated fluids (dielectric), and mineral or synthetic oils. Selection factors:

- Thermal performance: specific heat, thermal conductivity, and viscosity.

- Electrical properties: dielectric vs conductive — determines immersion suitability.

- Environmental impact: GWP (global warming potential), recyclability, disposal rules.

- Cost and supply chain: long-term availability and handling costs.

Quick-Connects and Leak-Free Interfaces

Quick connectors must enable hot-swap capability while ensuring zero leakage and minimal pressure drop. Reliable mechanical designs and pressure-tested seals reduce risk and speed maintenance operations.

CDU — The Heart of a Liquid Loop

The Cooling Distribution Unit (CDU) conditions and routes coolant: it controls flow rate, temperature, and pressure, and it provides isolation between the primary and secondary loops. A well-designed CDU supports redundancy, filtration, and monitoring, all of which are critical for enterprise-grade reliability.

Heat Rejection — Dry Coolers and Cooling Towers

Final heat rejection can be handled via air-cooled dry coolers, evaporative cooling towers, or hybrid systems. Aligning CDU setpoints and heat rejection capacity with local climate is essential to maximize free cooling and minimize compressor-based chiller runtime.
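One way to reason about matching setpoints to climate is to estimate how often a dry cooler alone can meet the coolant supply setpoint. The sketch below assumes a simple rule: free cooling is available whenever ambient temperature plus an approach temperature is at or below the setpoint. The function name, the 8 K approach, and the temperature samples are all hypothetical; a real study would use hourly weather data for the site and the vendor's rated approach.

```python
def free_cooling_fraction(ambient_temps_c, supply_setpoint_c, approach_k):
    """Fraction of samples where a dry cooler alone can meet the supply setpoint.

    Assumes free cooling works when ambient + approach <= setpoint.
    """
    if not ambient_temps_c:
        raise ValueError("need at least one temperature sample")
    usable = sum(1 for t in ambient_temps_c if t + approach_k <= supply_setpoint_c)
    return usable / len(ambient_temps_c)


# Made-up ambient dry-bulb samples in deg C, for illustration only.
sample_temps_c = [12.0, 18.0, 24.0, 29.0, 33.0, 27.0, 21.0, 15.0]

fractions = {
    setpoint: free_cooling_fraction(sample_temps_c, setpoint, approach_k=8.0)
    for setpoint in (25.0, 35.0, 45.0)
}

for setpoint, fraction in fractions.items():
    print(f"Supply setpoint {setpoint:.0f} C: free cooling {fraction:.0%} of samples")
```

With this made-up data, raising the supply setpoint from 25°C to 45°C moves the free-cooling share from a quarter of the samples to all of them, which illustrates why warm-water loops reduce compressor runtime.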

Cost-Benefit Analysis: How Much Is Cooling Really Costing You?

Hidden Costs of Air Cooling

Operators often underestimate the concealed costs associated with air cooling:

- Power for fans and CRAC/CRAH units.

- Compressor energy for chilled water loops.

- Reduced compute throughput due to thermal throttling leading to longer job times.

- Poor space utilization because of lower allowable rack density.

TCO Model for Liquid Cooling

When evaluating TCO, account for initial capital expenditures (cold plates, CDUs, piping, installation) and offset them with operational savings: pump power replacing massive fan and chiller loads, denser compute on the same footprint, and potential revenue uplift from higher throughput.

PUE Improvements and ROI

Liquid cooling projects routinely reduce PUE from typical air-cooled baselines (≈1.4–1.8 in many legacy facilities) to ~1.1 or lower. Consider a 1 MW data center example:

If air-cooled PUE = 1.5, total site energy = 1.5 MW for 1 MW of IT load. If liquid-cooled PUE = 1.1, site energy drops to 1.1 MW — a 400 kW savings. Over five years this can translate to substantial utility cost reductions that often recover incremental capital spend within 2–4 years depending on energy prices and project scale.
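The 1 MW example can be worked through as a short calculation. This is a sketch under stated assumptions: the electricity price of 0.10 per kWh and the incremental capital cost of 1,000,000 are placeholders rather than figures from any real project, and the load is assumed constant year-round.

```python
HOURS_PER_YEAR = 8760


def facility_power_kw(it_load_kw, pue):
    """Total site power implied by an IT load and a PUE."""
    return it_load_kw * pue


def annual_savings(it_load_kw, pue_before, pue_after, price_per_kwh):
    """Return (saved kW, saved kWh per year, saved cost per year)."""
    saved_kw = (facility_power_kw(it_load_kw, pue_before)
                - facility_power_kw(it_load_kw, pue_after))
    saved_kwh = saved_kw * HOURS_PER_YEAR
    return saved_kw, saved_kwh, saved_kwh * price_per_kwh


# 1 MW IT load, PUE 1.5 -> 1.1, as in the example above.
saved_kw, saved_kwh, saved_cost = annual_savings(
    it_load_kw=1000.0, pue_before=1.5, pue_after=1.1,
    price_per_kwh=0.10,  # assumed energy price
)

INCREMENTAL_CAPEX = 1_000_000.0  # assumed extra capital cost of liquid cooling
payback_years = INCREMENTAL_CAPEX / saved_cost

print(f"Power saved:    {saved_kw:.0f} kW")
print(f"Energy saved:   {saved_kwh / 1e6:.2f} GWh per year")
print(f"Cost saved:     {saved_cost:,.0f} per year")
print(f"Simple payback: {payback_years:.1f} years")
```

With these placeholder inputs, the 400 kW reduction corresponds to about 3.5 GWh per year and a simple payback just under three years. The result scales directly with the assumed energy price and capital cost.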

Beyond direct savings, liquid cooling enables higher rack density: more compute per square meter increases revenue potential for cloud or HPC providers — a compounding financial benefit beyond raw energy savings.

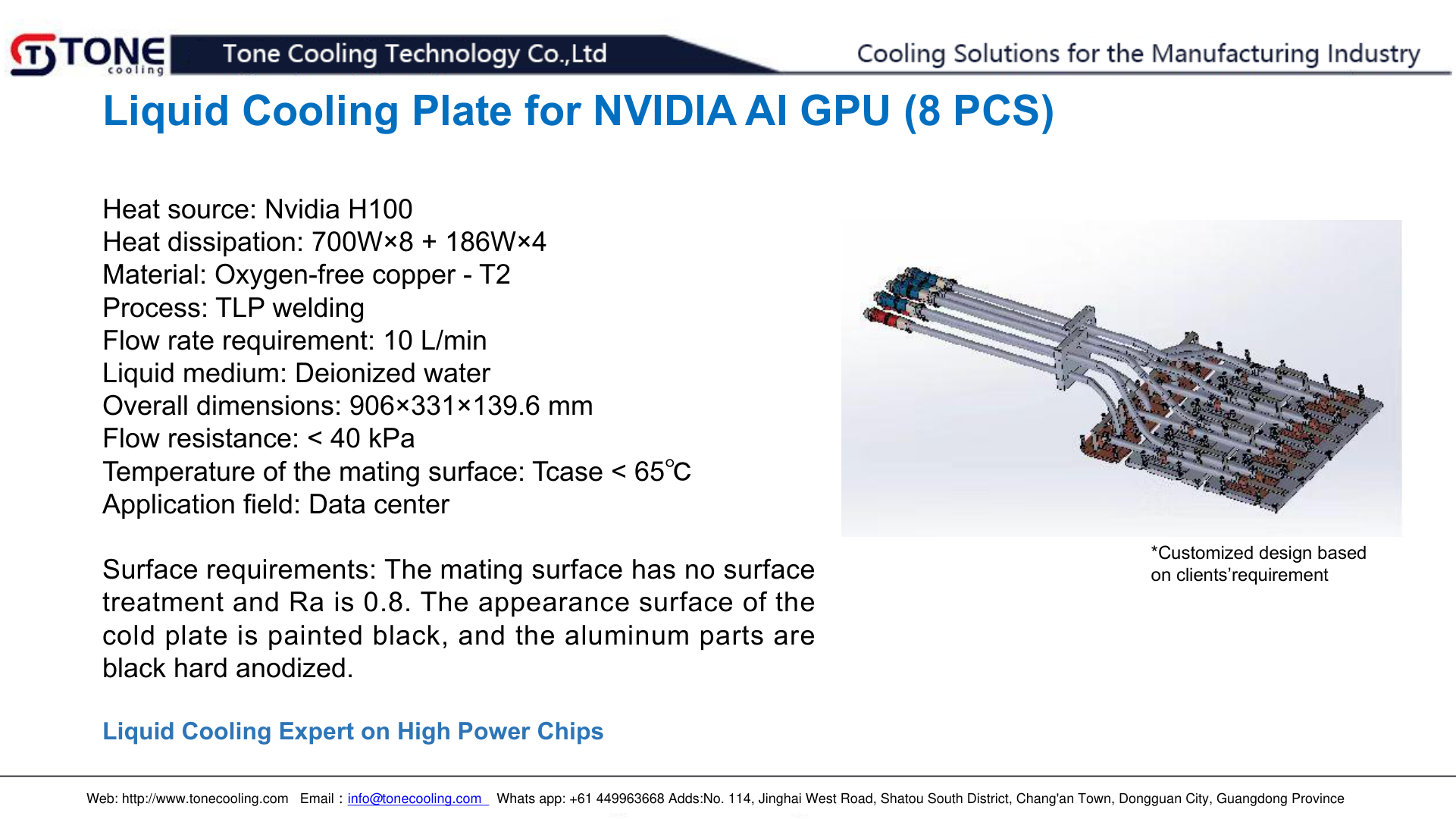

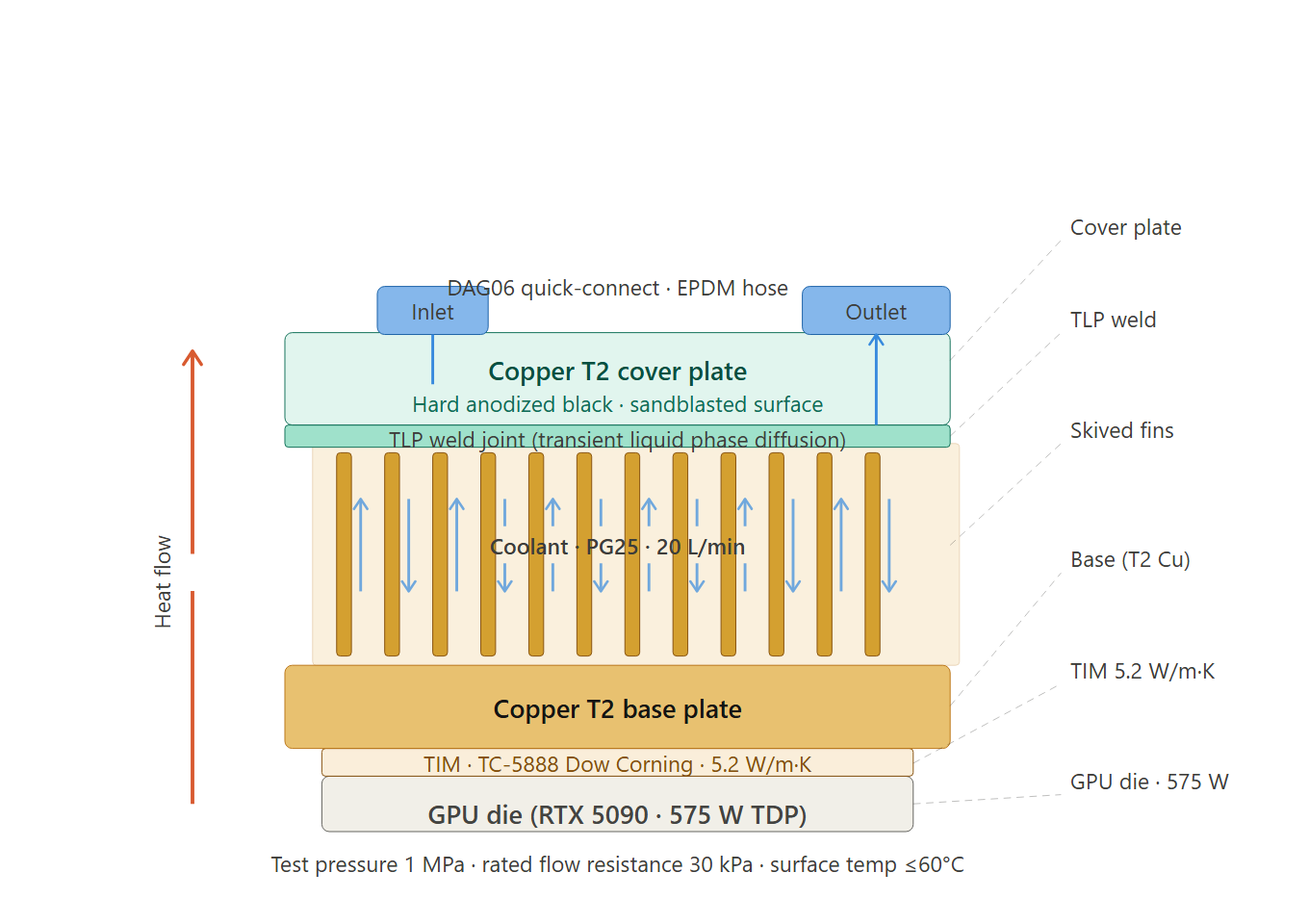

AI Compute Driver: Why Liquid Cold Plates Are Becoming Standard for AI Servers

AI GPU Cooling Challenges

Modern AI training uses tightly-coupled multi-GPU nodes with continuous high-power draws during long training epochs. Thermal spikes, sustained high loads, and concentrated hot spots make traditional cooling inadequate.

How Liquid Cold Plates Unlock Peak AI Performance

- Maintain boost clocks: Direct cooling prevents thermal throttling, ensuring GPUs maintain peak frequency for longer durations.

- Increase rack density: With efficient cooling, more GPUs can be packed per rack without violating thermal or electrical constraints.

- Decrease training time: By avoiding throttling and sustaining higher performance, model training time shortens, enabling faster iteration and a lower cost per training job.

Major cloud providers and hyperscalers have publicly signaled liquid cooling roadmaps — reflecting the industry consensus that liquid cooling is essential for future AI infrastructure.

Real-World Case Studies: Liquid Cooling at Scale

Case 1: Large Internet Company — AI GPU Farm

Challenge: Thousands of GPUs generating enormous heat, with a sub-1.2 PUE target.

Approach: Cold-plate-based direct-to-chip cooling with centralized CDUs and coordinated dry-cooler arrays.

Outcome: Achieved PUE ≈ 1.15, increased sustained compute throughput by ~20%, and dramatically lowered cooling OPEX.

Case 2: National Supercomputing Center

Challenge: Achieve exascale-class sustained performance while managing extreme heat fluxes.

Approach: Hybrid architecture combining cold plates for chips and selective immersion racks for ultra-dense nodes.

Outcome: Broke prior thermal bottlenecks and enabled higher sustained compute without proportional increases in facility power.

Implementation Considerations and Risk Mitigation

Retrofit vs. Greenfield

Cold-plate deployments are often ideal for retrofits because they can be implemented incrementally with minimal structural changes. Immersion is typically a greenfield or major reconfiguration approach due to floor and containment requirements.

Leak Risk Management

Concerns about leaks are common but can be mitigated via high-quality quick-connects, double-containment piping where required, leak detection sensors, and modular CDU redundancy. Robust commissioning and emergency isolation procedures further reduce the practical risk.

Operations & Maintenance

Modern CDUs are modular and often hot-swappable. Standardized maintenance playbooks, staff training, and remote monitoring (telemetry on flow, temp, conductivity) are essential. Many operators find that liquid-cooled environments reduce dust-related failures and extend component lifetimes.
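The telemetry mentioned above (flow, temperature, conductivity) lends itself to simple threshold checks. The sketch below is illustrative only: the `LoopReading` type, the `check_reading` function, and all limit values are hypothetical and would need to come from the CDU vendor's specifications and the site's fluid chemistry program.

```python
from dataclasses import dataclass


@dataclass
class LoopReading:
    """One telemetry sample from a coolant loop (hypothetical schema)."""
    flow_lpm: float            # coolant flow, litres per minute
    supply_temp_c: float       # coolant supply temperature, deg C
    conductivity_us_cm: float  # coolant conductivity, microsiemens per cm


# Hypothetical alert limits, not vendor or standards values.
MIN_FLOW_LPM = 60.0
MAX_SUPPLY_TEMP_C = 45.0
MAX_CONDUCTIVITY_US_CM = 5.0


def check_reading(reading):
    """Return a list of alert strings; an empty list means all values are in range."""
    alerts = []
    if reading.flow_lpm < MIN_FLOW_LPM:
        alerts.append("low flow")
    if reading.supply_temp_c > MAX_SUPPLY_TEMP_C:
        alerts.append("high supply temperature")
    if reading.conductivity_us_cm > MAX_CONDUCTIVITY_US_CM:
        alerts.append("high conductivity")
    return alerts


healthy = LoopReading(flow_lpm=72.0, supply_temp_c=32.0, conductivity_us_cm=1.2)
degraded = LoopReading(flow_lpm=41.0, supply_temp_c=47.5, conductivity_us_cm=8.0)

print(check_reading(healthy))
print(check_reading(degraded))
```

In practice, checks like these would feed an existing monitoring system and would typically be combined with trend analysis instead of relying on fixed limits alone.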

Future Trends: Where Data Center Liquid Cooling Is Heading

- Standardization & Modularity: Industry bodies and consortia are creating connector and interface standards to simplify cross-vendor deployments and accelerate adoption.

- Chip-level and full-stack liquid cooling: Cooling is expanding beyond CPUs and GPUs to memory, NVMe, and power electronics for holistic thermal control.

- AI-driven operations: Predictive control loops using AI will dynamically adapt flow and setpoints to maximize free cooling and minimize energy use.

- Waste heat reuse at scale: Liquid return temperatures of 50–60°C enable district heating, agricultural uses, or industrial processes — turning a cost center into a value opportunity.

FAQ: Common Concerns About Data Center Liquid Cooling

Q1: How disruptive is deploying liquid cooling in an existing data center?

A: Cold-plate approaches can be phased in with manageable disruption—typically limited to rack-level changes and CDU install. Immersion requires more planning and physical modifications.

Q2: Are leaks a realistic showstopper?

A: With engineered quick connects, quality manufacturing, redundancy, and automated leak detection, the residual risk is extremely low and manageable within standard data center risk frameworks.

Q3: How often must coolant be changed or maintained?

A: It depends on the fluid. Deionized water requires monitoring and occasional replacement/conditioning (1–2 years common). Fluorinated dielectric fluids often require far less frequent service (multi-year intervals).

Q4: Can liquid cooling really cut costs by 50%?

A: While the “50%” outcome depends on baseline PUE, energy prices, and scale, many projects report OPEX reductions in the 30–60% range for cooling-related costs — especially where free cooling is exploited and rack densities are raised.

Conclusion

Data center operators face a choice: either continue to invest in ever-larger air-moving and chiller infrastructure or embrace liquid cooling to increase density, lower PUE, and enable next-generation AI workloads. For many organizations scaling AI or HPC, liquid cooling is the pragmatic and strategic answer. Whether via direct cold plates for staged rollouts or immersion for extreme-density facilities, liquid cooling provides a pathway to sustainable, high-performance compute.

Contact Tone Cooling for Custom Data Center Liquid Cooling Solutions

Tone Cooling Technology Co., Ltd. is a leader in custom liquid cold plate and data center cooling solutions. Founded in 2004, Tone Cooling combines patented manufacturing processes, advanced CFD design, and decades of thermal engineering experience to deliver reliable, high-performance solutions for AI servers, HPC, and hyperscale data centers. Contact our engineering team to evaluate your facility, model ROI, and design a phased deployment plan tailored to your needs.

Visit: https://tonecooling.com — or contact our sales and engineering team for a tailored consultation.

For industry standards and best practices, refer to ASHRAE.

Frequently Asked Questions

Does ToneCooling offer OEM and ODM services?

Yes. ToneCooling provides full OEM and ODM services including custom design, prototyping, thermal simulation, and volume production. We serve customers in North America, Europe, and Asia-Pacific with engineering support and samples within 2–4 weeks.

What materials are used in ToneCooling liquid cold plates?

ToneCooling manufactures cold plates in aluminum (6061/6063), copper (C1100/C1020), and stainless steel. Aluminum FSW cold plates are ideal for high-volume EV and industrial applications, while copper brazed cold plates provide maximum thermal conductivity (398 W/m·K) for high heat flux electronics.

What is the typical lead time for custom cold plates?

Prototype samples are delivered within 2–4 weeks. Production orders typically ship within 4–6 weeks after sample approval. ToneCooling responds to all quote requests within 24 business hours.

Get a Custom Thermal Solution from ToneCooling

ToneCooling is a professional liquid cooling solution provider specializing in custom cold plates, AIO coolers, and advanced thermal management systems. With ISO 9001:2015 certified manufacturing, we deliver prototype samples within 2–4 weeks. Contact ToneCooling today for a free consultation and quote — we respond within 24 business hours.


Need a Custom Liquid Cold Plate?

ToneCooling's data center liquid cooling solutions are high-performance thermal management systems engineered for demanding applications.

ToneCooling engineers design thermal solutions for your specific requirements. Get a detailed response within 24-48 hours.

Liquid cooling is a critical component of modern thermal management. ToneCooling engineers these solutions for AI servers, data centers, EV batteries, and power electronics that require high-performance liquid cooling.

Why Choose ToneCooling for Data Center Liquid Cooling Solutions

ToneCooling has manufactured over 50,000 data center liquid cooling units for global OEM customers. Production uses vacuum brazing furnaces operating below 10⁻⁴ mbar, FSW machines holding ≤0.02 mm flatness, and helium leak detection at 10⁻⁸ mbar·L/s. Every unit is pressure tested at 25 bar.

Our engineering team provides free design consultation, CFD simulation, and rapid prototyping in 7-14 days. Production orders ship in 4-6 weeks under ISO 9001:2015 quality management.


Last Updated: 2026-04-08

DR Kevin, Thermal Engineer, ToneCooling
